
Photo: Khaled Al Sabbagh

Improving the Performance of Machine Learning-based Methods for Continuous Integration by Handling Noise

Natural Science & IT

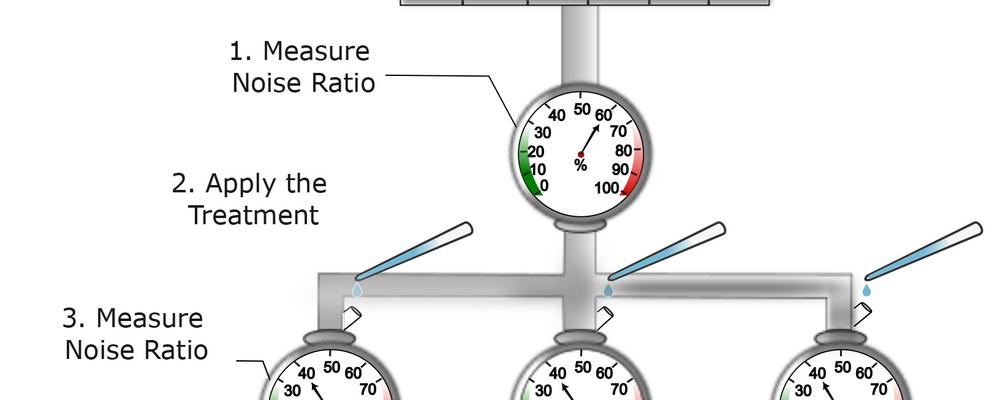

Khaled Al Sabbagh defends his doctoral thesis in Computer Science and Engineering, titled "Improving the Performance of Machine Learning-based Methods for Continuous Integration by Handling Noise".

Doctoral thesis defence