Cardiobotics - Deep learning for cardiovascular disease detection

Short description

The research group develops deep machine learning methods for automatic interpretation and assessment of cardiac computed tomography and echocardiography images. The aim is to enable fast, accurate and reliable methods for medical image understanding by means of large sets of expert-annotated medical data and state-of-the-art machine learning models. Our research team includes both medical and technical researchers: engineers and physicians work in close collaboration to make sure that we develop novel deep machine learning models trained and evaluated on high-quality images and annotations.

Automatic tools that can interpret and assess medical images quickly, accurately and reliably are in high demand in both medical research and clinical care. In recent years, deep machine learning has proved to excel at medical image analysis tasks. We aim to enable automatic tools for medical image understanding based on state-of-the-art deep machine learning models trained on large sets of expert-annotated medical data.

Medical imaging, that is, tools for producing visual representations of the human body, allows scientists and clinicians to examine, diagnose and treat diseases. Medical images, acquired with techniques such as ultrasound and computed tomography, provide non-invasive information essential for understanding and modeling tissue and organ anatomy and function. Decades of successful development of imaging techniques have improved image quality to the point of capturing fine anatomical and functional details, while the number of images acquired on a daily basis is steadily growing. The demand for automatic analysis tools has increased along with this development, since manual inspection cannot effectively and accurately process such large amounts of image data.

Over the last couple of decades, the field of machine learning has provided a specific set of algorithms that excel at computer vision tasks: deep machine learning models. These models have received a great deal of attention from the medical imaging community as well. Deep machine learning models contain huge numbers of learnable parameters, which makes them extremely powerful for modeling complex connections between input and output. However, learning these models requires large sets of expert-annotated training data.

Deep models for coronary artery segmentation and detection of stenoses

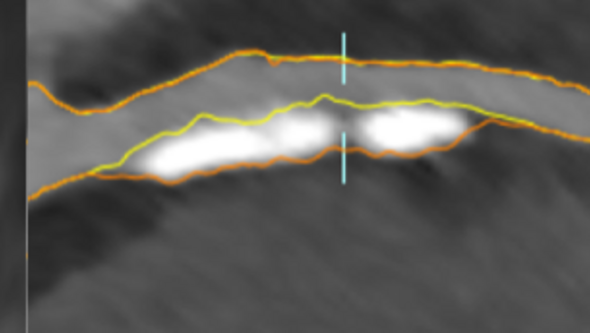

Buildup of atherosclerotic plaques in the coronary arteries, the blood vessels that provide oxygenated blood to the heart, is a biomarker for myocardial infarction ("heart attack"). Plaque rupture is the most common cause of myocardial infarction, and the risk of rupture is related to the size and composition of the plaque. Plaques in the vessel wall cause stenosis, a narrowing of the blood vessel, which can be detected and quantified by comparing the width of the lumen and the vessel wall of the coronary arteries. Automatic segmentation of coronary arteries, including lumen, wall and plaque delineations, can help in assessing the risk of myocardial infarction.
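As a rough illustration of how a segmentation could feed into stenosis quantification, the sketch below compares the lumen diameter with the outer vessel diameter at a series of cross-sections along an artery. This is only one simple way to express the comparison and is not the method used in the project; the function names, the per-cross-section diameter inputs and the ratio-based definition of narrowing are all assumptions made for the example.

```python
def percent_narrowing(lumen_diameter_mm, vessel_diameter_mm):
    """Return how much of the vessel diameter is not open lumen, in percent.

    Assumed definition for this sketch: 100 * (1 - lumen / vessel).
    """
    if vessel_diameter_mm <= 0:
        raise ValueError("vessel diameter must be positive")
    if not 0 <= lumen_diameter_mm <= vessel_diameter_mm:
        raise ValueError("lumen diameter must lie between 0 and the vessel diameter")
    return 100.0 * (1.0 - lumen_diameter_mm / vessel_diameter_mm)


def most_narrowed_section(lumen_diameters_mm, vessel_diameters_mm):
    """Return (index, percent narrowing) of the most narrowed cross-section."""
    if len(lumen_diameters_mm) != len(vessel_diameters_mm):
        raise ValueError("both sequences must have the same length")
    if not lumen_diameters_mm:
        raise ValueError("at least one cross-section is required")
    narrowing = [
        percent_narrowing(lumen, vessel)
        for lumen, vessel in zip(lumen_diameters_mm, vessel_diameters_mm)
    ]
    # Pick the cross-section where the narrowing is largest.
    index = max(range(len(narrowing)), key=narrowing.__getitem__)
    return index, narrowing[index]


if __name__ == "__main__":
    # Hypothetical diameters (mm) measured at three cross-sections.
    lumen = [3.0, 1.5, 2.4]
    vessel = [3.0, 3.0, 3.0]
    print(most_narrowed_section(lumen, vessel))
```

In practice the diameters would be derived from the lumen and vessel wall delineations, and a clinical grading would use an established stenosis definition rather than this simplified ratio.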

Currently, we are manually annotating coronary artery trees in 600 cardiac CTA (computed tomography angiography) images from the SCAPIS dataset. The manual annotations include the lumen and the vessel wall of the main coronary arteries (vessels large enough to be deemed medically relevant) as well as plaques and stenoses. The aim is to train a deep machine learning model able to detect and classify plaques and stenoses with an accuracy comparable to that of an expert radiologist.

Generalizable deep models for echo assessment

Echocardiography (cardiac ultrasound) is a widely used medical imaging technique for heart function assessment. However, it takes several years to train a physician to become a senior specialist in echocardiography. Automatic echocardiography evaluation software could be of great use in clinical work: it could act as a gatekeeper that sorts out the less complex cases, it could reduce the burden on physicians and it could enable echocardiography assessments at clinics with no senior echocardiography specialist. A unique dataset at the Sahlgrenska University Hospital, including over 90,000 echocardiographic examinations with manual measures and annotations of the size and function of the heart chambers and valves, should allow for training a deep model able to automatically solve some of the most important tasks included in an echocardiographic examination.

Using existing clinical data for training and evaluating a deep model able to perform a diverse set of tasks is a challenging problem. The examinations are acquired in different years, by different physicians, from different viewpoints and with different scanner types. The assessments vary from volume estimation to valve function classification. Thus, the model must generalize between tasks and between domains. Further, the model should be robust to poor-quality examinations, perhaps with missing images or performed by inexperienced physicians. Finally, the memory footprint of each examination and the sheer size of the dataset put demands on running times and hardware. Developing a deep model that successfully meets these requirements on robustness, generalizability and scalability would open the way for other projects to use already existing clinical data instead of relying on new, time-consuming manual annotation.

News

Chalmers: AI will soon be able to warn of disease in advance

Sveriges Radio: AI kan förutse hjärtinfarkt ("AI can predict heart attacks", in Swedish)

Key publications

- Semi-supervised learning with natural language processing for right ventricle classification in echocardiography - a scalable approach

- High-quality annotations for deep learning enabled plaque analysis in SCAPIS cardiac computed tomography angiography

- A deep multi-stream model for robust prediction of left ventricular ejection fraction in 2D echocardiography